Boost Query Speed in MongoDB Atlas with Denormalized Data Models

In MongoDB, denormalization offers a powerful way to accelerate query performance. By storing related data together, you can avoid JOIN-style operations (in MongoDB, the $lookup aggregation stage), which are often computationally expensive. Reads get faster, which makes the approach a good fit for applications where quick data retrieval is critical. Denormalized data models also simplify query logic, since one document can often answer the whole request. The strategy has costs as well, and understanding them helps you make informed decisions for your specific use case.

Key Takeaways

Denormalization accelerates query performance by consolidating related data into a single document, eliminating the need for complex JOIN operations.

This approach is particularly beneficial for read-heavy applications, such as e-commerce and social media, where quick data retrieval is essential.

Properly structuring embedded documents and leveraging MongoDB's indexing features can significantly enhance query speed and reduce latency.

While denormalization improves performance, it also increases storage requirements and data redundancy, necessitating careful management of data consistency.

Utilize MongoDB Atlas tools like the Performance Advisor to monitor and optimize query performance, ensuring your database remains efficient.

Evaluate your application's needs to determine when to use denormalization versus normalization, balancing performance with data integrity and storage costs.

Understanding Denormalized Data Models in MongoDB

What is Denormalization in MongoDB?

Denormalization in MongoDB involves structuring your database to store related data together within a single document. This approach reduces the need to retrieve data from multiple collections, which can slow down query performance. By embedding related fields or arrays into a single document, you can access all necessary information with fewer queries. This method aligns well with MongoDB's document-oriented design, where each document represents a self-contained unit of data.

For example, instead of storing customer details and order history in separate collections, you can embed the order history directly within the customer document. This structure eliminates the need for complex operations like JOINs, which are common in relational databases. Denormalization simplifies data retrieval and enhances query speed, making it a preferred choice for applications requiring real-time performance.
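To make the customer and order example concrete, here is a minimal sketch in Python that uses plain dictionaries to stand in for MongoDB documents. The field names (`customer_id`, `orders`, `total`) and the helper `embed_orders` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Normalized shape: customers and orders live in separate collections.
customers = [
    {"_id": 1, "name": "Ada"},
    {"_id": 2, "name": "Grace"},
]
orders = [
    {"_id": 101, "customer_id": 1, "total": 40.0},
    {"_id": 102, "customer_id": 1, "total": 15.5},
    {"_id": 103, "customer_id": 2, "total": 99.0},
]


def embed_orders(customers, orders):
    """Return denormalized customer documents with their orders embedded."""
    by_customer = defaultdict(list)
    for order in orders:
        # Drop the foreign key; the parent document now implies it.
        embedded = {k: v for k, v in order.items() if k != "customer_id"}
        by_customer[order["customer_id"]].append(embedded)
    return [{**c, "orders": by_customer[c["_id"]]} for c in customers]


denormalized = embed_orders(customers, orders)
print(denormalized[0])
```

Each resulting document carries everything needed to render a customer's order history, so a single read by `_id` is enough.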

How Denormalization Aligns with MongoDB Atlas Architecture

MongoDB Atlas, a Database-as-a-Service (DBaaS) offering, supports denormalized data models well. Because related data lives in one document, a read can often be served by a single operation. Denormalization does not speed up writes; it trades some write cost for read speed. Features like Global Clusters place data close to users, which keeps latency low for geographically distributed applications.

The document model in MongoDB Atlas complements denormalization by allowing you to store related data in a single document. This compatibility reduces the overhead of managing multiple collections and simplifies your MongoDB schema design. Additionally, MongoDB Atlas provides tools for indexing and query optimization, which further enhance the performance of denormalized structures.

Comparing Normalized and Denormalized Data Models

Normalized and denormalized data models serve different purposes. Normalization focuses on reducing redundancy by splitting data into multiple collections, which ensures data integrity and minimizes storage requirements. However, this approach often requires complex queries to retrieve related information, leading to slower performance.

In contrast, denormalization prioritizes query speed by consolidating related data into a single document. While this increases redundancy and storage usage, it significantly improves read performance. For instance:

Normalized Model: A product catalog might store product details in one collection and reviews in another. Queries would require multiple lookups to combine this data.

Denormalized Model: The same catalog could embed reviews directly within the product document, enabling faster and simpler queries.

Choosing between these models depends on your application's needs. If you prioritize read performance and simple queries, denormalization is often the better choice.

How Denormalized Data Models Accelerate Query Performance

Eliminating Complex JOIN Operations

In traditional relational databases, retrieving related data often requires JOIN operations. These operations combine data from multiple tables, which can lead to slow queries, especially as the dataset grows. With database denormalization, you eliminate the need for these complex JOINs by storing related data together in a single document. This approach reduces the computational overhead and accelerates query performance.

For example, instead of querying separate collections for customer details and their order history, you can embed the order history directly within the customer document. This structure allows you to fetch all necessary information in one query, significantly improving performance. By avoiding JOINs, you simplify your queries and reduce the time your database spends processing them.
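The difference in query shape can be sketched as follows. The normalized version needs an aggregation pipeline with a `$lookup` stage, while the denormalized version needs only a filter. The collection name `orders` and both helper functions are assumptions made for this example.

```python
def normalized_query(customer_id):
    """Aggregation pipeline that joins orders onto a customer with $lookup."""
    return [
        {"$match": {"_id": customer_id}},
        {
            "$lookup": {
                "from": "orders",  # hypothetical collection name
                "localField": "_id",
                "foreignField": "customer_id",
                "as": "orders",
            }
        },
    ]


def denormalized_query(customer_id):
    """Filter for a single lookup by _id; the orders are already embedded."""
    return {"_id": customer_id}


pipeline = normalized_query(42)
flt = denormalized_query(42)
print(len(pipeline), "pipeline stages vs. a one-field filter:", flt)
```

With PyMongo, the first would typically be passed to `collection.aggregate(pipeline)` and the second to `collection.find_one(flt)`.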

"Denormalization improves query performance by reducing the number of joins needed to fetch the data and by using precomputed fields." – Capella Solutions Blog

This principle aligns perfectly with MongoDB's document-oriented design. By leveraging denormalization, you ensure that your application retrieves data faster, even under heavy workloads.

Enhancing Read Performance and Reducing Latency

Denormalization directly impacts read performance by minimizing the number of queries required to retrieve data. When you store related fields together, your database can access all relevant information in a single operation. This efficiency reduces latency, ensuring that your application delivers results quickly.

For read-heavy applications, such as e-commerce platforms or social media networks, this improvement is critical. Users expect instant responses, and slow queries can lead to poor user experiences. By adopting a denormalized structure, you optimize your database for fast reads, meeting the demands of modern applications.

Additionally, MongoDB Atlas enhances this process with features like Global Clusters. These clusters allow you to perform low-latency operations across different regions, further boosting performance. Combining denormalization with MongoDB Atlas ensures that your queries run efficiently, regardless of geographic distribution.

Simplifying Query Logic for Faster Execution

Denormalization not only accelerates query performance but also simplifies query logic. When you consolidate related data into a single document, your queries become more straightforward. Instead of writing complex queries to join multiple collections, you can use simple queries to retrieve the data you need.

For instance, consider a social media application where user profiles and posts are stored together. A single query can fetch both the user's details and their posts, eliminating the need for additional lookups. This simplicity reduces the chances of errors in query design and improves overall database performance.

Moreover, simplified queries are easier to optimize. You can leverage indexing on frequently queried fields to further enhance performance. By combining denormalization with proper indexing strategies, you create a database structure that supports fast and efficient data retrieval.

"Denormalization involves duplicating fields or deriving new fields from existing ones to improve read performance and query performance." – MongoDB Blog

By adopting these practices, you ensure that your database remains responsive, even as your application scales.

Tradeoffs of Using Denormalized Data Models

Managing Data Redundancy

When you use database denormalization, you intentionally duplicate data across multiple documents. This redundancy simplifies queries and accelerates performance, but it introduces challenges. Managing redundant data requires careful planning to avoid inconsistencies. For example, if you update a piece of information in one document, you must ensure the same update occurs in all other instances where that data exists. Failing to do so can lead to discrepancies, which may affect the reliability of your database.

To address this, you should implement automated processes or application logic to synchronize updates across duplicates. Change streams, and Atlas Triggers built on top of them, let you react to a change in one document and propagate it to the copies. By maintaining consistency in redundant data, you can enjoy the benefits of denormalization without compromising data integrity.

Addressing Increased Storage Requirements

Denormalization often increases storage requirements because the same data gets stored in multiple places. While this tradeoff improves query performance, it can strain your storage resources, especially as your database grows. For instance, embedding related data within documents results in larger document sizes, which may impact storage costs and scalability.

To mitigate this, you should evaluate your application's storage needs and prioritize critical use cases for denormalization. Compressing data or archiving less frequently accessed information can help optimize storage usage. MongoDB Atlas offers Online Archive, which lets you balance performance and cost by moving infrequently accessed data to lower-cost storage.
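To get a feel for the duplication cost described above, a rough back-of-the-envelope estimate can help. The sketch below uses compact JSON length as a stand-in for document size; real BSON sizes differ, so treat the numbers as approximate. The helper names are hypothetical. The 16 MB constant is MongoDB's maximum size for a single document.

```python
import json

MAX_BSON_DOCUMENT_BYTES = 16 * 1024 * 1024  # MongoDB's per-document limit


def approx_size_bytes(doc):
    """Rough size estimate from compact JSON; real BSON size will differ."""
    return len(json.dumps(doc, separators=(",", ":")).encode("utf-8"))


def duplication_overhead(embedded_doc, copies):
    """Extra bytes from storing `copies` copies instead of a single one."""
    return approx_size_bytes(embedded_doc) * max(copies - 1, 0)


def fits_in_one_document(doc):
    """True if the estimated size stays under the per-document limit."""
    return approx_size_bytes(doc) < MAX_BSON_DOCUMENT_BYTES


address = {"street": "1 Main St", "city": "Springfield", "zip": "00000"}
per_copy = approx_size_bytes(address)
extra = duplication_overhead(address, copies=1000)
print(per_copy, extra, fits_in_one_document(address))
```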

Ensuring Data Consistency Across Duplicates

Maintaining data consistency becomes more complex with denormalization. When the same data exists in multiple locations, any change must propagate to all instances to prevent inconsistencies. For example, if a customer's address changes, you need to update every document containing that address. This process can become cumbersome without proper mechanisms in place.

You can use MongoDB's atomic operations to ensure updates occur consistently within a single document. For cross-document updates, consider implementing application-level logic or using MongoDB's multi-document transactions. These strategies help you maintain consistency while leveraging the performance benefits of denormalization.
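The address example can be sketched as a fan-out update. The code below builds the filter and update documents you might pass to an `update_many` call, then simulates the effect on in-memory dictionaries. The embedded `customer.id` and `customer.address` fields are assumed names, not a prescribed schema.

```python
def address_update_spec(customer_id, new_address):
    """Build the (filter, update) pair for an update over all copies."""
    flt = {"customer.id": customer_id}
    update = {"$set": {"customer.address": new_address}}
    return flt, update


def apply_in_memory(docs, customer_id, new_address):
    """Simulate the fan-out on plain dicts; returns how many docs changed."""
    changed = 0
    for doc in docs:
        if doc["customer"]["id"] == customer_id:
            doc["customer"]["address"] = new_address
            changed += 1
    return changed


orders = [
    {"_id": 1, "customer": {"id": 7, "address": "old"}},
    {"_id": 2, "customer": {"id": 7, "address": "old"}},
    {"_id": 3, "customer": {"id": 8, "address": "elsewhere"}},
]
flt, update = address_update_spec(7, "new")
changed = apply_in_memory(orders, 7, "new")
print(flt, update, changed)
```

With PyMongo, the pair would go to `collection.update_many(flt, update)`. If the source record and its copies must change together, that call can run inside a multi-document transaction.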

"Database denormalization is a widely used technique to improve database query performance for faster data access." – Modern Analytics Insights

By understanding and addressing these tradeoffs, you can make informed decisions about when and how to use denormalized data models. Proper planning ensures that you maximize the advantages of denormalization while minimizing its challenges.

Best Practices for Optimizing Performance with Denormalized Data Models

Identifying Suitable Use Cases for Denormalization

To optimize your MongoDB database, you must first identify scenarios where database denormalization provides the most value. Applications that prioritize fast read operations, such as e-commerce platforms or social media networks, benefit significantly from denormalized data models. These systems often require quick access to related data, making denormalization a practical choice.

For example, in an e-commerce application, embedding order details within customer documents reduces the need for multiple queries. This structure accelerates data retrieval and enhances user experience. Similarly, in a social media platform, storing user profiles and their posts together simplifies queries and improves performance.

However, not all use cases suit denormalization. Write-heavy applications or those requiring strict data consistency may face challenges. Evaluate your application's requirements carefully to determine if denormalization aligns with your goals.

Structuring Embedded Documents for Related Data

Properly structuring embedded documents is essential for effective database denormalization. Embedded documents allow you to store related data within a single document, reducing the need for complex queries. This approach aligns with MongoDB's document-oriented design, enabling faster access to related information.

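When embedding data, focus on logical groupings. For instance, in an IoT application, you can embed sensor readings within device documents. This structure ensures that all relevant data resides in one place, simplifying data retrieval. Avoid over-embedding: excessively large documents hurt performance and storage efficiency, and MongoDB caps a single document at 16 MB. Arrays that grow without bound, such as a full history of sensor readings, are the usual culprit, so cap them or move older entries into their own collection.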
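One way to keep an embedded array from growing indefinitely is to trim it on every append. The sketch below builds a `$push` update that uses the `$each` and `$slice` modifiers to keep only the newest entries, and mirrors the behaviour on a plain dictionary. The `readings` field and both helpers are illustrative assumptions.

```python
def push_capped(field, item, max_items):
    """Update document that appends `item` and keeps only the newest entries.

    `max_items` is assumed to be a positive integer.
    """
    return {"$push": {field: {"$each": [item], "$slice": -max_items}}}


def simulate_push_capped(doc, field, item, max_items):
    """Apply the same append-then-trim behaviour to a plain dict."""
    doc.setdefault(field, []).append(item)
    doc[field] = doc[field][-max_items:]
    return doc


device = {"_id": "thermostat-1", "readings": [20.5, 20.7, 21.0]}
simulate_push_capped(device, "readings", 21.4, max_items=3)
print(push_capped("readings", 21.4, 3))
print(device["readings"])
```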

MongoDB's aggregation pipeline further enhances the utility of embedded documents. By using the pipeline, you can process and transform data efficiently. For example, you can aggregate sales data embedded within product documents to generate real-time insights. This combination of embedding and aggregation pipeline ensures optimal performance for read-heavy applications.
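As a sketch of aggregating over embedded data, the pipeline below totals revenue per product from an embedded `sales` array. A pure-Python function reproduces the same result so the logic can be checked without a database. The `sales` and `amount` field names are assumptions.

```python
# Pipeline sketch: total revenue per product from an embedded `sales` array.
pipeline = [
    {"$unwind": "$sales"},
    {"$group": {"_id": "$_id", "revenue": {"$sum": "$sales.amount"}}},
    {"$sort": {"revenue": -1}},
]


def revenue_per_product(products):
    """Pure-Python equivalent of the pipeline, for illustration only."""
    totals = [
        {"_id": p["_id"], "revenue": sum(s["amount"] for s in p["sales"])}
        for p in products
        if p["sales"]  # $unwind drops documents whose array is empty
    ]
    return sorted(totals, key=lambda t: t["revenue"], reverse=True)


products = [
    {"_id": "mug", "sales": [{"amount": 8}, {"amount": 8}]},
    {"_id": "lamp", "sales": [{"amount": 30}]},
    {"_id": "rug", "sales": []},
]
print(revenue_per_product(products))
```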

Leveraging Indexing to Improve Query Speed

Indexes play a critical role in improving query speed within denormalized data models. By creating indexes on frequently queried fields, you enable MongoDB to locate data quickly without scanning entire collections. This strategy significantly enhances performance, especially for large datasets.

"Indexes hold the data on the indexed field in an easy-to-traverse, ordered form. When a query is executed, if an appropriate index exists, the database can operate on the indexes rather than doing a scan through all documents to find the matching subset of documents." – MongoDB Performance Expert

For example, in a denormalized e-commerce database, indexing the "customer_id" field within order documents allows you to retrieve all orders for a specific customer efficiently. Similarly, in a social media application, indexing the "user_id" field within embedded posts ensures fast access to a user's activity.
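The idea of an ordered structure replacing a full scan can be illustrated with a toy model. This is not how MongoDB implements its indexes; it is only a small sketch that uses a sorted list and binary search to show why an ordered lookup touches fewer entries than a scan. All names are hypothetical.

```python
import bisect

orders = [
    {"_id": 1, "customer_id": 30},
    {"_id": 2, "customer_id": 10},
    {"_id": 3, "customer_id": 30},
    {"_id": 4, "customer_id": 20},
]

# "Index": (key, position) pairs kept in sorted order, built once.
index = sorted((o["customer_id"], pos) for pos, o in enumerate(orders))


def find_by_scan(customer_id):
    """Collection scan: inspects every document."""
    return [o for o in orders if o["customer_id"] == customer_id]


def find_by_index(customer_id):
    """Index lookup: binary-search the sorted keys, then fetch matches."""
    lo = bisect.bisect_left(index, (customer_id, -1))
    hi = bisect.bisect_right(index, (customer_id, len(orders)))
    return [orders[pos] for _, pos in index[lo:hi]]


print(find_by_index(30) == find_by_scan(30))
```

In a real deployment the index would be created by the database, for example with PyMongo's `collection.create_index([("customer_id", 1)])`.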

While indexes improve performance, use them judiciously. Excessive or improperly configured indexes can slow down write operations and increase storage requirements. Regularly monitor your database and adjust indexes based on query patterns to maintain optimal performance.

Monitoring and Tuning Query Performance in MongoDB Atlas

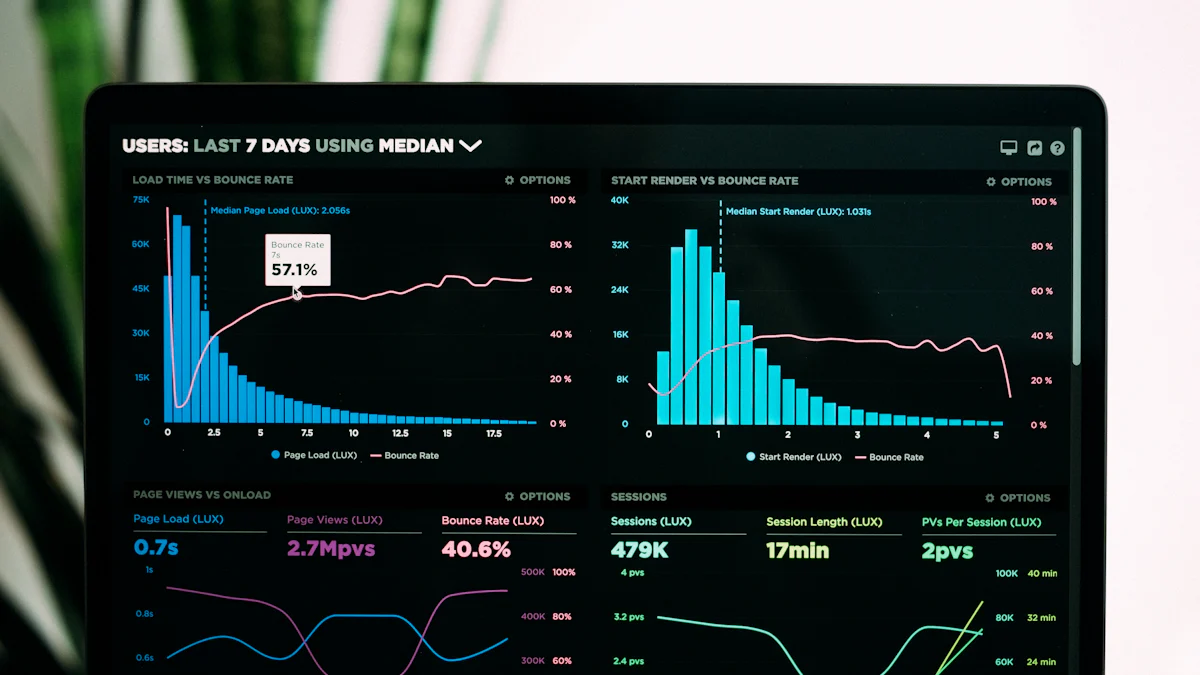

Monitoring and tuning query performance in MongoDB Atlas ensures your database operates at peak efficiency. MongoDB Atlas provides tools to help you identify and resolve performance bottlenecks. By actively monitoring slow queries and optimizing them, you can maintain a responsive and efficient database.

Start by using the Performance Advisor in MongoDB Atlas. This tool automatically detects slow-running queries and suggests improvements. It analyzes query patterns and recommends creating or modifying indexes to enhance performance. For example, if a query frequently scans a large collection, the Performance Advisor might suggest adding an index to the queried field. This adjustment reduces the time required to locate data, ensuring faster query execution.

"Indexes are essential for improving query speed. They allow the database to locate data efficiently without scanning entire collections." – MongoDB Documentation

Keep in mind that MongoDB maintains indexes as part of every write. When a document changes, the affected index entries are updated with it, so queries always see current index data. This matters for applications requiring real-time data access, such as e-commerce platforms or IoT systems, but it also means each additional index adds work to every insert and update.

In addition to indexes, use the aggregation pipeline to streamline data processing. The aggregation pipeline allows you to transform and analyze data within the database, reducing the need for complex application-side logic. For instance, you can use the pipeline to calculate sales trends or generate reports directly from embedded documents. This approach not only simplifies your queries but also enhances performance by reducing the workload on your application.

MongoDB also optimizes queries automatically. The query planner evaluates candidate execution plans, caches the most efficient one for each query shape, and re-evaluates when the cached plan stops performing well or when indexes change. You can inspect the chosen plan with explain(). Regularly reviewing query performance metrics helps you identify areas for improvement and make necessary adjustments.

To maintain optimal performance, follow these steps:

Monitor Slow Queries: Use the Performance Advisor to identify queries that take longer than expected.

Optimize Indexes: Create or modify indexes based on query patterns and recommendations.

Leverage Aggregation: Use the aggregation pipeline to process data efficiently within the database.

Review Query Plans: Periodically check query execution plans to ensure they remain efficient.
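The "Review Query Plans" step can be partly automated. The sketch below walks a dictionary shaped like the `queryPlanner.winningPlan` section of explain output and reports whether it contains a `COLLSCAN` stage, which usually signals a missing index. The sample dictionaries are hand-written for illustration, and the exact explain layout can vary by server version, so treat the traversal as an assumption to adapt.

```python
def plan_stages(plan):
    """Yield stage names from a winningPlan-style dict, outermost first."""
    yield plan.get("stage")
    if "inputStage" in plan:
        yield from plan_stages(plan["inputStage"])
    for child in plan.get("inputStages", []):
        yield from plan_stages(child)


def uses_collection_scan(explain_output):
    """True if the winning plan contains a COLLSCAN stage."""
    winning = explain_output["queryPlanner"]["winningPlan"]
    return "COLLSCAN" in plan_stages(winning)


# Hand-written samples in the general shape of explain() output.
unindexed = {"queryPlanner": {"winningPlan": {"stage": "COLLSCAN"}}}
indexed = {
    "queryPlanner": {
        "winningPlan": {"stage": "FETCH", "inputStage": {"stage": "IXSCAN"}}
    }
}
print(uses_collection_scan(unindexed), uses_collection_scan(indexed))
```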

By actively monitoring and tuning your database, you can ensure that MongoDB Atlas delivers consistent and reliable performance. These practices help you meet the demands of modern applications while maintaining a seamless user experience.

Practical Applications of Denormalized Data Models

E-commerce: Embedding Product and Order Data

In e-commerce, speed and efficiency are critical for delivering a seamless shopping experience. By embedding product and order data into a single document, you can streamline your database operations. For instance, instead of querying a separate orders collection every time a product page loads, you can store a summary of recent orders directly within each product document. This structure allows you to retrieve a product and its recent purchase activity in one query, while keeping the embedded list small enough that documents do not grow without bound.

Imagine a scenario where a customer browses a product catalog. With a denormalized model, you can quickly display product details, pricing, and recent purchase history without performing multiple queries. This approach not only accelerates query performance but also simplifies your application logic. By embedding related data, you reduce the need for complex joins, ensuring that your e-commerce platform remains responsive even during peak traffic.

"Denormalized data models allow applications to retrieve related data. A denormalized data model with embedded data combines all related data."

This method is particularly effective for real-time inventory management. When a customer places an order, the system can instantly update the stock count within the same document. This real-time capability ensures accurate inventory tracking and enhances the overall user experience.
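Because a write to a single document is atomic, the stock check and the decrement can be combined into one conditional update. The sketch below builds such a filter and update pair and simulates it in memory. The `stock` field and helper names are assumptions for illustration.

```python
def reserve_stock_spec(product_id, quantity):
    """(filter, update) pair: decrement only if enough stock remains."""
    flt = {"_id": product_id, "stock": {"$gte": quantity}}
    update = {"$inc": {"stock": -quantity}}
    return flt, update


def reserve_in_memory(product, quantity):
    """Simulate the conditional update; returns True if it applied."""
    if product["stock"] >= quantity:
        product["stock"] -= quantity
        return True
    return False  # the filter matched nothing, so nothing changed


product = {"_id": "sku-1", "stock": 3}
first = reserve_in_memory(product, 2)
second = reserve_in_memory(product, 2)
print(first, second, product["stock"])
```

With PyMongo, the pair would go to `collection.update_one(flt, update)`, and a `modified_count` of zero on the result would indicate that the item was out of stock.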

Social Media: Storing User and Post Data Together

Social media platforms thrive on real-time interactions. Users expect instant access to profiles, posts, and comments. By embedding user and post data into a single document, you can meet these expectations. For example, you can store a user's profile information alongside their posts and activity history. This structure enables you to fetch all relevant data in one query, reducing latency and improving performance.

Consider a scenario where a user views another user's profile. With a denormalized model, you can display the profile details, recent posts, and engagement metrics without querying multiple collections. This approach not only speeds up data retrieval but also simplifies your query logic. By embedding related data, you ensure that your platform remains fast and efficient, even as the user base grows.
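A profile view rarely needs every post a user has ever written. As a sketch, the projection below uses the `$slice` projection operator to return only the newest embedded posts, with a pure-Python function mirroring the result. The `posts` field and helper names are hypothetical.

```python
def profile_view_query(user_id, recent=5):
    """(filter, projection) pair returning only the newest embedded posts."""
    flt = {"_id": user_id}
    projection = {"posts": {"$slice": -recent}}
    return flt, projection


def simulate_projection(user, recent=5):
    """Apply the same trimming to a plain dict without mutating it."""
    return {**user, "posts": user["posts"][-recent:]}


user = {"_id": "u1", "name": "Ada", "posts": [f"post {i}" for i in range(1, 9)]}
flt, projection = profile_view_query("u1", recent=3)
view = simulate_projection(user, recent=3)
print(projection, view["posts"])
```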

"Denormalization simplifies your application code by providing a more intuitive and straightforward data model. It eliminates the need for intricate joins, making it easier to fetch and manipulate data."

Real-time notifications are another area where denormalization shines. When a user likes or comments on a post, the system can instantly update the relevant document. This real-time capability ensures that users receive timely updates, enhancing their engagement with the platform.

IoT: Combining Sensor and Device Data for Real-Time Queries

The Internet of Things (IoT) relies heavily on real-time data processing. Devices and sensors generate vast amounts of data that must be analyzed and acted upon instantly. By embedding sensor and device data into a single document, you can optimize your database for real-time queries. For example, you can store a device's metadata alongside its sensor readings. This structure allows you to retrieve all relevant data in one query, enabling faster decision-making.

Imagine a smart home system where sensors monitor temperature, humidity, and motion. With a denormalized model, you can combine all sensor readings into a single document for each device. This approach simplifies data retrieval and ensures that the system can respond to changes in real time. For instance, if the temperature exceeds a certain threshold, the system can immediately trigger an alert or adjust the thermostat.

Real-time analytics is another critical application of denormalization in IoT. By embedding data, you can perform aggregation and analysis directly within the database. This capability allows you to generate insights and take action without relying on external processing. For example, you can calculate average sensor readings or detect anomalies in real time, ensuring that your IoT system remains efficient and responsive.
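Precomputed fields are one way to keep such analytics cheap. The sketch below maintains a running count and sum next to the embedded readings, so the average is available without rescanning the array, and flags readings that stray too far from it. The `stats` sub-document, the tolerance value, and all helper names are assumptions.

```python
def record_reading_spec(value):
    """Update that stores the reading and maintains running totals."""
    return {
        "$push": {"readings": value},
        "$inc": {"stats.count": 1, "stats.sum": value},
    }


def record_in_memory(device, value):
    """Apply the same change to a plain dict and return the new average."""
    device["readings"].append(value)
    device["stats"]["count"] += 1
    device["stats"]["sum"] += value
    return device["stats"]["sum"] / device["stats"]["count"]


def is_anomaly(value, average, tolerance):
    """Flag readings further than `tolerance` from the running average."""
    return abs(value - average) > tolerance


device = {"_id": "sensor-9", "readings": [], "stats": {"count": 0, "sum": 0.0}}
for v in (20.0, 22.0, 21.0):
    avg = record_in_memory(device, v)
print(avg, is_anomaly(35.0, avg, tolerance=5.0))
```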

When to Avoid Denormalization in MongoDB

Scenarios Favoring Normalized Data Models

While denormalization enhances query performance, certain scenarios make normalized data models a better choice. Normalization minimizes redundancy by organizing data into separate collections. This structure ensures data consistency and reduces storage requirements, making it ideal for applications where data integrity is critical.

For instance, financial systems often require strict accuracy. In such cases, normalized models prevent discrepancies by storing each piece of data in one location. If you update a record, the change reflects across the system without requiring multiple updates. This approach eliminates the risk of inconsistent data, which is crucial for maintaining trust and reliability.

Another scenario involves write-heavy applications. Normalized models excel in environments where frequent updates occur. By reducing redundancy, they minimize the number of records that need modification. This efficiency improves write performance and reduces the likelihood of errors during updates.

"Normalization ensures data integrity by reducing redundancy, while denormalization adds redundant data to improve read performance."

You should also consider normalized models when storage costs are a concern. Denormalization increases storage usage due to data duplication. For applications with limited resources, normalized structures provide a cost-effective solution without compromising functionality.

Balancing Performance with Data Integrity and Storage Costs

Choosing between normalization and denormalization requires careful evaluation of your application's priorities. While denormalization optimizes queries by consolidating related data, it introduces redundancy. This redundancy can lead to challenges in maintaining data consistency and managing storage efficiently.

To strike the right balance, assess your application's primary needs:

Data Integrity: If your application demands high accuracy, prioritize normalization. For example, healthcare systems rely on precise data to ensure patient safety. Normalized models reduce the risk of errors by maintaining a single source of truth for each data point.

Performance: For applications requiring fast data retrieval, denormalization offers significant advantages. E-commerce platforms, for instance, benefit from embedding product and order details together. This structure accelerates queries and enhances user experience.

Storage Costs: Evaluate the tradeoff between performance and storage. Denormalization increases storage requirements, which may not be feasible for all applications. Consider using tiered storage solutions to manage costs effectively while leveraging denormalization where necessary.

"Balancing normalization and denormalization is crucial for optimizing databases."

By understanding these factors, you can make informed decisions about when to use normalized or denormalized data models. MongoDB provides the flexibility to implement either approach based on your specific needs. Whether you prioritize performance, integrity, or cost, aligning your database design with your application's goals ensures optimal results.

Denormalized data models offer a practical way to boost query performance in MongoDB. By consolidating related data, you can achieve faster read operations and simplify query logic. The size of the gain depends on your workload and schema, so measure it against your own queries before committing to a design. You must also weigh the tradeoffs, such as increased storage needs and data redundancy. By following best practices and leveraging MongoDB Atlas features like indexing and monitoring tools, you can optimize performance while maintaining data integrity and reliability.

FAQ

What is denormalization in MongoDB?

Denormalization in MongoDB involves consolidating related data into a single document. This approach reduces the need for multiple queries and eliminates costly JOIN operations. By embedding or duplicating data, you can optimize query performance and simplify data access. For example, instead of storing user profiles and their posts in separate collections, you can embed posts directly within the user document.

When should you consider using denormalization?

You should consider denormalization when performance is your top priority, especially for read-heavy applications. If your queries frequently access related data together, denormalization can simplify the process and improve speed. For instance, e-commerce platforms or social media applications benefit from embedding related data to deliver faster results. However, always weigh the benefits against potential challenges like data redundancy.

How does denormalization improve query performance?

Denormalization improves query performance by reducing the number of queries needed to retrieve data. It eliminates the need for complex JOINs, which can slow down performance as datasets grow. By storing related data together, you enable your database to fetch all necessary information in a single query. This approach is particularly effective for applications requiring real-time responses.

Example: In an IoT system, embedding sensor readings within device documents allows you to retrieve all relevant data in one query, ensuring faster decision-making.

What are the tradeoffs of using denormalized data models?

While denormalization boosts query speed, it introduces tradeoffs like increased storage requirements and data redundancy. Storing duplicate data across documents can lead to consistency challenges. For example, updating a customer's address in one document requires updating all other instances where that address exists. Proper planning and tools like Atlas Triggers or change streams can help manage these challenges effectively.

Is denormalization suitable for all applications?

No, denormalization is not suitable for every application. Write-heavy applications or those requiring strict data consistency often perform better with normalized models. For example, financial systems prioritize accuracy and integrity, making normalization a better choice. Evaluate your application's needs carefully before deciding on a data model.

How do you decide between normalization and denormalization?

To decide, consider your application's priorities:

Performance: Choose denormalization for fast data retrieval in read-heavy applications.

Data Integrity: Opt for normalization when accuracy and consistency are critical.

Storage Costs: Use normalization if storage resources are limited, as denormalization increases storage usage.

Balancing these factors ensures your database design aligns with your goals.

Can denormalization help with reporting and analytics?

Yes, denormalization can significantly enhance reporting and analytics. By precomputing commonly-needed values and embedding them within documents, you reduce the time required to generate reports. For example, storing aggregated sales data directly in product documents allows you to quickly analyze trends without querying multiple collections.

What are some best practices for implementing denormalization?

To implement denormalization effectively:

Identify use cases where it provides the most value, such as read-heavy applications.

Structure embedded documents logically to group related data.

Use indexing on frequently queried fields to maintain performance.

Monitor and tune query performance regularly using tools like MongoDB Atlas's Performance Advisor.

These practices ensure you maximize the benefits of denormalization while minimizing its challenges.

How does denormalization align with MongoDB's architecture?

MongoDB's document-oriented architecture complements denormalization. It allows you to store related data in a single document, reducing the overhead of managing multiple collections. Features like indexing and aggregation pipelines further enhance the performance of denormalized data models. MongoDB keeps indexes up to date on every write and its query planner selects execution plans automatically, while Atlas tools such as the Performance Advisor make it easier to maintain efficient databases.

When should you avoid denormalization?

Avoid denormalization when your application requires frequent updates or strict data consistency. For example, healthcare systems or financial applications benefit more from normalized models to ensure accuracy. Additionally, if storage costs are a concern, normalization minimizes redundancy and reduces storage usage.
