Mastering ETL Development: Concepts, Processes, and Best Practices

In today's data-driven world, businesses are constantly seeking ways to gain valuable insights and make informed decisions. Efficient data integration is a crucial part of this process, and Extract, Transform, Load (ETL) development plays a vital role in achieving it. ETL development enables organizations to extract data from various sources, transform it into a consistent format, and load it into a target system. Mastering ETL concepts, processes, and best practices is therefore essential for ensuring data quality, streamlining data integration, and supporting regulatory compliance. In this blog post, we explore why ETL development matters, discuss its key concepts and processes, and share best practices to help businesses achieve efficient data integration.

Data Integration: Consolidating Data for Effective Analysis

Importance of Data Integration

Data integration plays a crucial role in today's data-driven world. It involves combining data from various sources into a single, unified format. This consolidation allows businesses to have a comprehensive view of their operations and customers, leading to effective analysis, reporting, and decision-making.

One of the key benefits of data integration is that it enables businesses to gain insights that would otherwise be difficult to obtain. By bringing together data from different systems and sources, organizations can identify patterns, trends, and correlations that can drive strategic initiatives. For example, by integrating customer data from multiple touchpoints such as sales transactions, website interactions, and social media engagements, businesses can develop a 360-degree view of their customers' preferences and behaviors.

Another advantage of data integration is the ability to improve operational efficiency. When data is scattered across multiple systems or departments, it becomes challenging to access and analyze. By consolidating this data into a unified format, businesses can streamline their processes and eliminate redundant tasks. For instance, integrating inventory data with sales data can help optimize supply chain management by ensuring accurate demand forecasting and inventory replenishment.

Data integration also plays a vital role in regulatory compliance. Many industries have strict regulations regarding the handling and storage of sensitive information. By consolidating data into a centralized system with proper security measures in place, businesses can more easily enforce access controls, audit how data is handled, and demonstrate compliance with these regulations. This not only reduces the risk of penalties but also enhances customer trust by demonstrating a commitment to protecting their personal information.

Tapdata: Real-time Data Capture and Sync

Tapdata is a solution that offers real-time data capture and sync capabilities for efficient data integration. With Tapdata, businesses can quickly consolidate data from multiple sources, which supports timely analysis and decision-making.

One of the key features of Tapdata is its ability to capture real-time data updates from various systems or applications. This ensures that businesses always have access to the most up-to-date information, allowing for accurate and timely analysis. Whether it's sales data, customer interactions, or operational metrics, Tapdata ensures data freshness and accuracy.

Tapdata also excels in its ability to sync data across different platforms and databases. It supports a wide range of data sources, including relational databases, cloud storage services, APIs, and more. This flexibility allows businesses to integrate data from diverse systems without the need for complex coding or manual data transfers.

Another key feature of Tapdata is its flexible and adaptive schema handling. Traditional data integration solutions often require predefined schemas or rigid structures, which makes it hard to accommodate changes in data sources or formats. Tapdata's schema-on-read approach instead adapts the schema dynamically, so businesses can incorporate new data sources or modify existing ones without disrupting their integration processes.

ETL Tools: Choosing the Right Solution

Overview of ETL Tools

Different ETL tools are available in the market, each with its own features, benefits, and limitations. These tools play a crucial role in the process of extracting, transforming, and loading data from various sources into a target destination such as a data warehouse or a database. By automating these processes, ETL tools save time and reduce manual effort, making them an essential component of any data integration project.

When choosing an ETL tool for your organization, it is important to consider several factors to ensure that you select the right solution that meets your specific requirements. Here are some key considerations:

Scalability

One of the primary factors to consider when choosing an ETL tool is scalability. As your organization grows and the volume of data increases, you need a tool that can handle large datasets efficiently. Look for an ETL tool that offers horizontal scalability by allowing you to distribute the workload across multiple servers or nodes. This ensures that your ETL processes can keep up with the growing demands of your business.

Ease of Use

Another important factor to consider is the ease of use of the ETL tool. Ideally, you want a tool that is intuitive and user-friendly, allowing both technical and non-technical users to easily navigate and operate it. Look for features like drag-and-drop interfaces, visual workflows, and pre-built connectors that simplify the development process and reduce the learning curve for new users.

Integration Capabilities

Consider the integration capabilities of the ETL tool with other systems in your technology stack. The tool should be able to seamlessly connect with various data sources such as databases, cloud storage platforms, APIs, and flat files. Additionally, it should support different data formats like CSV, XML, JSON, etc., ensuring compatibility with your existing infrastructure.

Performance Optimization

Efficient performance is crucial for any ETL process. Look for features in an ETL tool that optimize performance, such as parallel processing, data partitioning, and caching mechanisms. These features can significantly improve the speed and efficiency of your ETL processes, reducing the overall execution time.

Flexibility and Customization

Every organization has unique requirements when it comes to data integration. Look for an ETL tool that offers flexibility and customization options to meet your specific needs. This includes the ability to define custom transformations, create reusable templates or workflows, and integrate with external scripts or code.

In addition to these factors, it is also important to consider the cost of the ETL tool, vendor support and reputation, security features, and future roadmap of the tool. Evaluating multiple options through proof-of-concept projects or trials can help you make an informed decision.

Remember that choosing the right ETL tool is a critical decision that can impact the success of your data integration projects. Take the time to thoroughly evaluate different tools based on your specific requirements and consider seeking expert advice if needed.

By selecting an ETL tool that aligns with your organization's needs and goals, you can streamline your data integration processes, improve efficiency, and unlock valuable insights from your data.

Ensuring Data Quality and Cleansing

Significance of Data Quality

Data quality plays a crucial role in ensuring accurate and reliable analysis. When it comes to ETL development, data cleansing techniques are employed to identify and rectify errors, inconsistencies, and redundancies in the data. By addressing these issues, organizations can improve the overall quality of their data and enhance the effectiveness of their analysis.

Data Validation

One of the key aspects of data cleansing is data validation. This process involves checking the integrity and accuracy of the data by comparing it against predefined rules or criteria. By validating the data, any discrepancies or anomalies can be identified and addressed promptly. This helps in maintaining a high level of data quality throughout the ETL process.
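As a minimal sketch of rule-based validation, the example below assumes records arrive as Python dicts. The field names (`order_id`, `amount`, `email`) and the rules themselves are hypothetical illustrations, not a prescribed rule set. Failing records are kept together with the names of the rules they broke, so they can be logged or routed to a reject table instead of silently disappearing.

```python
import re
from typing import Callable

# Hypothetical rules: each maps a rule name to a predicate over one record.
RULES: dict[str, Callable[[dict], bool]] = {
    "order_id_present": lambda r: bool(r.get("order_id")),
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
    "email_format": lambda r: re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", str(r.get("email") or "")) is not None,
}

def validate(records: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Split records into valid rows and rejected rows annotated with the rules they failed."""
    valid, rejected = [], []
    for record in records:
        failed = [name for name, check in RULES.items() if not check(record)]
        if failed:
            rejected.append((record, failed))
        else:
            valid.append(record)
    return valid, rejected

rows = [
    {"order_id": "A1", "amount": 19.99, "email": "ana@example.com"},
    {"order_id": "", "amount": -5, "email": "not-an-email"},
]
good, bad = validate(rows)
print(len(good), bad[0][1])  # 1 ['order_id_present', 'amount_non_negative', 'email_format']
```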

Standardization

Another important practice for ensuring data quality is standardization. Inconsistent formats, units, or naming conventions can lead to confusion and inaccuracies during analysis. Therefore, it is essential to standardize the data by applying consistent formatting rules and guidelines. This includes standardizing date formats, numerical representations, and textual descriptions. By doing so, organizations can ensure that their data is uniform and easily interpretable.
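A minimal sketch of standardization follows, assuming source systems emit dates in a small set of known layouts and country names as free text. The layout list and the alias mapping are hypothetical; a real pipeline would build them from profiling its actual sources.

```python
from datetime import datetime

# Hypothetical date layouts observed in source systems; order matters for ambiguous values.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%m-%d-%Y", "%Y%m%d")

def standardize_date(value: str) -> str:
    """Parse a date in any known layout and return it as ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {value!r}")

def standardize_country(value: str) -> str:
    """Map free-text country names to one canonical code (hypothetical alias table)."""
    aliases = {"usa": "US", "united states": "US", "u.s.": "US", "uk": "GB", "united kingdom": "GB"}
    key = value.strip().lower()
    return aliases.get(key, value.strip().upper())

print(standardize_date("31/12/2023"))          # 2023-12-31
print(standardize_date("20240105"))            # 2024-01-05
print(standardize_country(" United States "))  # US
```

Raising on an unrecognized format, rather than guessing, keeps bad values visible to the validation step.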

Deduplication

Deduplication is a critical step in data cleansing that involves identifying and removing duplicate records from datasets. Duplicate entries not only consume unnecessary storage space but also introduce inaccuracies in analysis results. By implementing deduplication techniques, organizations can eliminate redundant information and maintain a clean dataset for analysis purposes.
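The sketch below shows exact-key deduplication after light normalization, assuming records are dicts and that a chosen set of key fields (here a hypothetical `email` field) identifies a real-world entity. It keeps the first occurrence of each key, which is only one possible survivorship policy; fuzzy matching of near-duplicates is outside its scope.

```python
def deduplicate(records: list[dict], key_fields: tuple[str, ...]) -> list[dict]:
    """Keep the first occurrence of each record, comparing on normalized key fields."""
    seen: set[tuple] = set()
    unique = []
    for record in records:
        # Normalize case and surrounding whitespace so trivially different copies match.
        key = tuple(str(record.get(f, "")).strip().lower() for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique

customers = [
    {"email": "Ana@Example.com", "name": "Ana"},
    {"email": "ana@example.com ", "name": "Ana M."},
    {"email": "bo@example.com", "name": "Bo"},
]
deduped = deduplicate(customers, ("email",))
print([c["name"] for c in deduped])  # ['Ana', 'Bo']
```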

In addition to these best practices, there are various tools available that can assist in ensuring data quality during ETL development. These tools provide functionalities such as automated validation checks, standardized formatting options, and advanced deduplication algorithms.

Importance of Data Cleansing

Data cleansing is not just about improving the accuracy of analysis; it also has several other benefits for organizations:

Enhanced Decision-Making

By ensuring high-quality data through effective cleansing techniques, organizations can make more informed decisions based on reliable insights. Clean and accurate data provides a solid foundation for decision-making processes, enabling organizations to identify trends, patterns, and correlations with confidence.

Increased Operational Efficiency

Data cleansing helps in streamlining business operations by eliminating unnecessary data and reducing the risk of errors. By removing duplicate or irrelevant records, organizations can optimize storage space and improve system performance. This leads to faster processing times and increased operational efficiency.

Improved Customer Experience

Clean and accurate data is essential for delivering personalized experiences to customers. By ensuring data quality through effective cleansing techniques, organizations can gain a better understanding of their customers' preferences, behaviors, and needs. This enables them to tailor their products or services accordingly, resulting in improved customer satisfaction and loyalty.

Optimizing ETL Performance

Techniques for ETL Performance Optimization

When it comes to ETL (Extract, Transform, Load) processes, optimizing performance is crucial for efficient data integration and analysis. By implementing various techniques, you can significantly improve the speed and efficiency of your ETL workflows. Here are some proven strategies to optimize ETL performance:

Parallel Processing

One of the most effective ways to enhance ETL performance is by leveraging parallel processing. This technique involves dividing a large task into smaller subtasks that can be executed simultaneously on multiple processors or threads. By distributing the workload across multiple resources, parallel processing allows for faster execution of ETL workflows.

Parallel processing offers several performance benefits. First, it reduces overall execution time by running multiple tasks concurrently. Second, it makes fuller use of the available computing power. Third, it improves scalability, because additional resources can be added to handle larger datasets or heavier workloads.
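Here is a minimal sketch of chunk-level parallelism using Python's standard `concurrent.futures` module. The transformation (adding a total with an assumed 20% tax) and the chunk and worker sizes are hypothetical. Threads mainly help with I/O-bound steps in CPython; for CPU-heavy transforms, `ProcessPoolExecutor` is usually the better fit, provided the worker functions are defined at module level so they can be pickled.

```python
from concurrent.futures import ThreadPoolExecutor

def chunked(items: list, size: int) -> list[list]:
    """Split a list into consecutive chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]

def transform_chunk(chunk: list[dict]) -> list[dict]:
    """Hypothetical transformation: add a total that includes an assumed 20% tax."""
    return [{**row, "total": round(row["amount"] * 1.2, 2)} for row in chunk]

def parallel_transform(rows: list[dict], chunk_size: int = 1000, workers: int = 4) -> list[dict]:
    """Transform chunks concurrently; Executor.map yields results in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(transform_chunk, chunked(rows, chunk_size))
        return [row for chunk in results for row in chunk]

rows = [{"id": i, "amount": float(i)} for i in range(10_000)]
out = parallel_transform(rows, chunk_size=2500)
print(len(out), out[1]["total"])  # 10000 1.2
```

Because `Executor.map` preserves input order, the output can be loaded exactly as if it had been processed sequentially.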

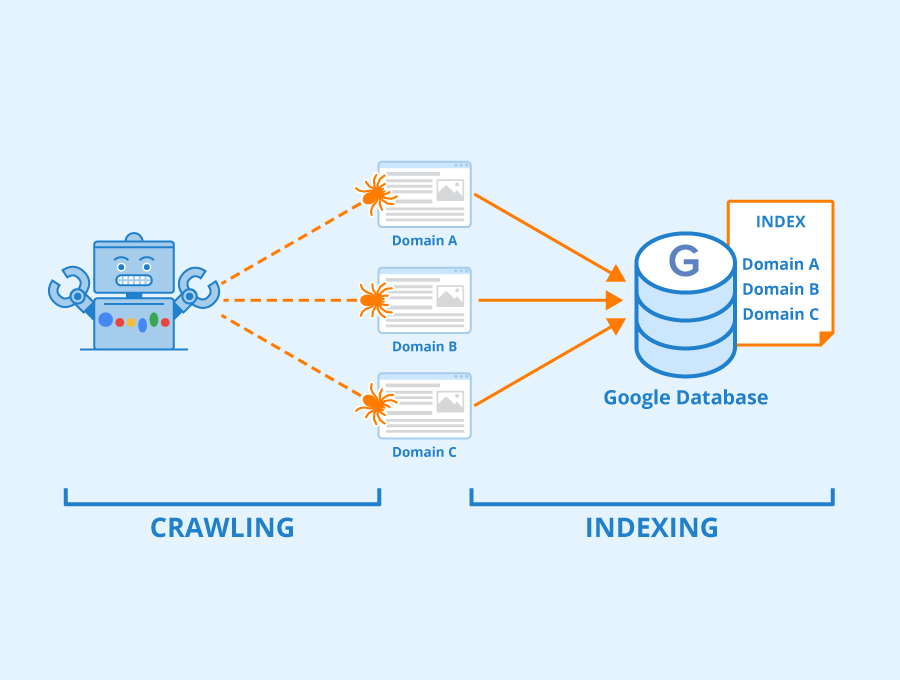

Indexing and Caching

Another technique to consider for optimizing ETL performance is indexing and caching. Indexing involves creating indexes on frequently accessed columns in your data sources. These indexes allow for faster data retrieval during extraction and transformation processes.

By strategically selecting columns for indexing based on their relevance and frequency of access, you can significantly improve the speed of data retrieval operations. Additionally, caching frequently accessed data in memory can further enhance performance by reducing disk I/O operations.
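Caching is easiest to see with a lookup that many rows repeat, such as resolving a customer's segment during transformation. The sketch below uses the standard-library `functools.lru_cache`; the reference data and function name are hypothetical stand-ins for a database or API call. If the reference data can change between runs, call `customer_segment.cache_clear()` before each run so stale values are not reused.

```python
from functools import lru_cache

# Hypothetical reference data; in practice this lookup would query a database or API.
CUSTOMER_SEGMENTS = {"C1": "retail", "C2": "wholesale"}
lookups: list[str] = []  # records every lookup that actually reached the "source"

@lru_cache(maxsize=10_000)
def customer_segment(customer_id: str) -> str:
    """Resolve a customer's segment; repeat IDs are answered from the in-memory cache."""
    lookups.append(customer_id)
    return CUSTOMER_SEGMENTS.get(customer_id, "unknown")

orders = ["C1", "C2", "C1", "C1", "C2", "C3"]
segments = [customer_segment(c) for c in orders]
print(segments)
print(len(lookups), customer_segment.cache_info())  # 3 source lookups for 6 orders
```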

Optimizing Data Extraction and Transformation Processes

Efficiently extracting and transforming data is essential for optimal ETL performance. There are several strategies you can employ to optimize these processes:

Selective Extraction: Instead of extracting all data from a source system, identify only the relevant subsets needed for analysis. This reduces unnecessary data transfer and improves overall performance.

Filtering and Aggregation: Apply filters early in the extraction process to eliminate irrelevant records before they enter the transformation phase. Additionally, perform aggregation operations during extraction to reduce the volume of data that needs to be processed.

Parallel Transformation: Similar to parallel processing, you can also parallelize the transformation phase by dividing the workload across multiple processors or threads. This allows for faster execution and improved performance.
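Selective extraction, early filtering, and aggregation can all be pushed into the source query so that only the needed rows leave the source system. The sketch below uses an in-memory SQLite database as a stand-in for an operational source; the `sales` table and its columns are hypothetical. Aggregating at extraction time only makes sense when downstream steps do not need the row-level detail.

```python
import sqlite3

# Hypothetical source table standing in for an operational system.
src = sqlite3.connect(":memory:")
src.execute("CREATE TABLE sales (id INTEGER PRIMARY KEY, region TEXT, sale_date TEXT, amount REAL)")
src.executemany(
    "INSERT INTO sales (region, sale_date, amount) VALUES (?, ?, ?)",
    [("EU", "2024-01-03", 100.0), ("EU", "2024-01-04", 50.0),
     ("US", "2024-01-03", 70.0), ("US", "2023-12-31", 999.0)],
)

# Name only the needed columns, filter in the source query, and aggregate there too,
# so only summary rows are transferred. ISO date strings compare correctly as text.
query = """
    SELECT region, SUM(amount) AS total, COUNT(*) AS n
    FROM sales
    WHERE sale_date >= ?
    GROUP BY region
    ORDER BY region
"""
summary = src.execute(query, ("2024-01-01",)).fetchall()
print(summary)  # [('EU', 150.0, 2), ('US', 70.0, 1)]
```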

Resource Optimization

Optimizing resource usage is crucial for efficient ETL performance. By carefully managing system resources, you can minimize bottlenecks and ensure smooth execution of your workflows. Here are some resource optimization techniques to consider:

Memory Management: Allocate sufficient memory for ETL processes to avoid excessive disk I/O operations. Utilize in-memory processing whenever possible to reduce latency and improve performance.

Disk Space Management: Regularly monitor and manage disk space utilization to prevent storage-related issues that can impact ETL performance. Implement archiving or compression techniques to optimize disk space usage.

CPU Utilization: Monitor CPU usage during ETL processes and identify any potential bottlenecks. Adjust task scheduling and resource allocation to maximize CPU utilization and minimize idle time.

Error Handling and Logging

Challenges in Error Handling

Errors are an inevitable part of any ETL process. They can occur at various stages, including data extraction, transformation, and loading. These errors can have a significant impact on the overall integrity and accuracy of the data being processed. Therefore, it is crucial to implement effective error handling mechanisms and logging systems to identify and resolve these errors promptly.

One of the primary challenges in error handling is detecting errors as they occur. With large volumes of data being processed, it can be challenging to identify specific errors without proper monitoring and logging systems in place. This is where implementing robust error detection mechanisms becomes essential. By continuously monitoring the ETL processes and capturing relevant error information, developers can quickly pinpoint the root cause of any issues that arise.

Another challenge in error handling is ensuring data integrity during the recovery process. When an error occurs, it is crucial to recover from it while maintaining the overall integrity of the data. This involves implementing appropriate error recovery strategies that allow for seamless continuation of the ETL process after an error has been resolved. For example, if a transformation error occurs, it may be necessary to roll back any changes made during that particular step and restart from a known good state.
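One common way to return to a known good state is to load each batch inside a single transaction. The sketch below uses Python's `sqlite3` module as a stand-in target; the `target` table and the `load_batch` helper are hypothetical. Using the connection as a context manager commits when the block succeeds and rolls back if an exception escapes, so a failure part-way through a batch leaves no partial writes.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE target (id INTEGER PRIMARY KEY, amount REAL NOT NULL)")
conn.execute("INSERT INTO target VALUES (1, 10.0)")
conn.commit()

def load_batch(conn: sqlite3.Connection, rows: list[tuple]) -> bool:
    """Load a batch atomically: either every row is written or none are."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.executemany("INSERT INTO target (id, amount) VALUES (?, ?)", rows)
        return True
    except sqlite3.IntegrityError as exc:
        print(f"Batch rolled back: {exc}")
        return False

# The third row violates NOT NULL, so the first two inserts are undone as well.
ok = load_batch(conn, [(2, 20.0), (3, 30.0), (4, None)])
count = conn.execute("SELECT COUNT(*) FROM target").fetchone()[0]
print(ok, count)  # False 1
```

After the rollback, the batch can be fixed and re-run from the same starting point.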

Implementing a comprehensive logging system is also vital for effective error handling. A logging system allows developers to capture detailed information about each step of the ETL process, including any errors encountered along the way. This information can then be used for troubleshooting purposes or for auditing and compliance requirements.

Best Practices in Error Handling

To ensure efficient error handling in your ETL processes, consider following these best practices:

Implement comprehensive error detection: Set up mechanisms to detect errors at every stage of the ETL process. This includes validating source data before extraction, performing data quality checks during transformation, and verifying successful loading into the target system.

Capture detailed error information: When an error occurs, capture as much relevant information as possible. This includes the error message, timestamp, affected data, and any other contextual details that can help in troubleshooting and resolving the issue.

Define clear error handling policies: Establish clear guidelines for how errors should be handled within your ETL processes. This includes defining the severity levels of different types of errors and specifying the appropriate actions to be taken for each level.

Implement automated error recovery: Whenever possible, automate the error recovery process to minimize manual intervention. This can involve automatically retrying failed steps, rolling back changes when necessary, or triggering notifications to alert stakeholders about critical errors.

Regularly monitor and review error logs: Continuously monitor the error logs generated by your ETL processes to identify any recurring patterns or trends. This can help in identifying potential areas for improvement and proactively addressing issues before they escalate.

Document and communicate error handling procedures: Document your error handling procedures and ensure that they are easily accessible to all relevant stakeholders. This helps in maintaining consistency across different projects and ensures that everyone involved understands how errors should be handled.
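Several of these practices (detailed error capture, severity levels, and automated recovery) can be combined in a small retry wrapper. The sketch below uses Python's standard `logging` module; `TransientError`, `run_with_retry`, and the flaky extraction step are hypothetical names for illustration. Retryable failures are logged at WARNING, exhausting the retries is logged at ERROR before the exception is re-raised for escalation, and any other exception propagates immediately.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("etl")

class TransientError(Exception):
    """Hypothetical marker for failures worth retrying, such as a dropped connection."""

def run_with_retry(step, name: str, attempts: int = 3, base_delay: float = 0.5):
    """Run an ETL step, retrying TransientError with exponential backoff.

    Any other exception is treated as non-retryable and propagates immediately.
    """
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except TransientError as exc:
            # Record what a troubleshooter needs: which step, which attempt, what failed.
            log.warning("step=%s attempt=%d/%d error=%r", name, attempt, attempts, exc)
            if attempt == attempts:
                # Escalate at a higher severity once automated recovery is exhausted.
                log.error("step=%s exhausted retries", name)
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical extraction step that fails twice before succeeding.
calls = {"n": 0}

def flaky_extract() -> list[str]:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("connection reset")
    return ["row1", "row2"]

result = run_with_retry(flaky_extract, "extract_orders", base_delay=0.01)
print(result)  # ['row1', 'row2'] after two logged retries
```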

By implementing these best practices, you can significantly improve the efficiency and reliability of your ETL processes. Effective error handling not only helps in maintaining data integrity but also minimizes downtime and ensures that critical business insights are delivered on time.

Designing Efficient Data Warehouses

Principles of Data Warehouse Design

Designing an efficient data warehouse is crucial for ensuring optimal performance and enabling effective data analysis. A well-designed data warehouse not only improves data accessibility but also enhances query performance, allowing organizations to derive valuable insights from their data. In this section, we will explore the key principles of data warehouse design that can help you create a robust and high-performing solution.

Data Modeling

One of the fundamental aspects of data warehouse design is data modeling. It involves organizing and structuring the data in a way that facilitates efficient storage and retrieval. When designing your data model, consider the specific requirements of your ETL processes and analytical queries. Use techniques like star schema or snowflake schema to simplify complex relationships between entities and optimize query performance.
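To make the star schema concrete, the sketch below builds a tiny one in an in-memory SQLite database: a `fact_sales` table holding measures, keyed to `dim_date` and `dim_product` dimension tables. All table and column names are hypothetical. The point is the query shape: every dimension is a single join away from the fact table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical star schema: one fact table keyed to two dimension tables.
conn.executescript("""
    CREATE TABLE dim_date (date_key INTEGER PRIMARY KEY, full_date TEXT, month TEXT);
    CREATE TABLE dim_product (product_key INTEGER PRIMARY KEY, name TEXT, category TEXT);
    CREATE TABLE fact_sales (
        date_key INTEGER REFERENCES dim_date(date_key),
        product_key INTEGER REFERENCES dim_product(product_key),
        quantity INTEGER,
        revenue REAL
    );
    INSERT INTO dim_date VALUES (20240105, '2024-01-05', '2024-01'), (20240210, '2024-02-10', '2024-02');
    INSERT INTO dim_product VALUES (1, 'Widget', 'Hardware'), (2, 'Manual', 'Books');
    INSERT INTO fact_sales VALUES (20240105, 1, 3, 30.0), (20240105, 2, 1, 15.0), (20240210, 1, 2, 20.0);
""")

# A typical analytical query: slice revenue by attributes from each dimension.
rows = conn.execute("""
    SELECT d.month, p.category, SUM(f.revenue)
    FROM fact_sales f
    JOIN dim_date d ON f.date_key = d.date_key
    JOIN dim_product p ON f.product_key = p.product_key
    GROUP BY d.month, p.category
    ORDER BY d.month, p.category
""").fetchall()
print(rows)
```

A snowflake schema would further normalize the dimensions (for example, moving `category` into its own table), trading an extra join for less redundancy.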

Indexing

Indexing plays a vital role in improving the speed of data retrieval operations in a data warehouse. By creating indexes on frequently queried columns, you can significantly reduce the time taken to fetch relevant records. However, it's important to strike a balance between the number of indexes and their impact on write operations. Over-indexing can lead to slower ETL processes, so carefully analyze your query patterns and choose appropriate columns for indexing.
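One way to check whether an index actually helps is to compare query plans before and after creating it. The sketch below uses SQLite's `EXPLAIN QUERY PLAN` through Python's `sqlite3` module; the `fact_orders` table and the index name are hypothetical. The exact plan wording differs between SQLite versions, so the comments describe the change rather than quote it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE fact_orders (order_id INTEGER, customer_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO fact_orders VALUES (?, ?, ?)",
    [(i, i % 500, float(i)) for i in range(10_000)],
)

query = "SELECT SUM(amount) FROM fact_orders WHERE customer_id = ?"

def plan(sql: str) -> str:
    """Return SQLite's query-plan description (the last column of each plan row)."""
    return " | ".join(row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, (42,)))

before = plan(query)  # a full scan of fact_orders
conn.execute("CREATE INDEX idx_orders_customer ON fact_orders (customer_id)")
after = plan(query)   # a search that uses idx_orders_customer
print(before)
print(after)
```

Every index must also be maintained on each insert during loads, which is the write-side cost the paragraph above warns about.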

Partitioning

Partitioning involves dividing large tables into smaller, more manageable segments based on specific criteria such as date ranges or key values. This technique improves query performance by allowing the database engine to scan only relevant partitions instead of scanning the entire table. Partitioning also simplifies maintenance tasks like archiving or deleting old data. Consider partitioning your tables based on commonly used filters in analytical queries to achieve better performance.
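The following toy sketch illustrates partition pruning in plain Python. Rows are stored in per-month partitions, and a date-range query touches only the partitions that overlap the range. The class and field names are hypothetical; real warehouses do this declaratively in the database engine (PostgreSQL, for instance, supports `PARTITION BY RANGE`), but the principle is the same.

```python
from collections import defaultdict
from datetime import date

class MonthPartitionedTable:
    """Toy table that stores rows in per-month partitions to illustrate pruning."""

    def __init__(self) -> None:
        self.partitions: dict[tuple[int, int], list[dict]] = defaultdict(list)

    def insert(self, row: dict) -> None:
        d: date = row["order_date"]
        self.partitions[(d.year, d.month)].append(row)

    def query(self, start: date, end: date) -> tuple[list[dict], int]:
        """Return rows with start <= order_date <= end and the number of partitions scanned."""
        # Prune: skip any partition whose month lies outside the requested range.
        wanted = [k for k in self.partitions
                  if (start.year, start.month) <= k <= (end.year, end.month)]
        rows = [r for k in wanted for r in self.partitions[k]
                if start <= r["order_date"] <= end]
        return rows, len(wanted)

table = MonthPartitionedTable()
for day in (date(2024, 1, 5), date(2024, 2, 10), date(2024, 3, 15), date(2024, 3, 20)):
    table.insert({"order_date": day, "amount": 1.0})

rows, scanned = table.query(date(2024, 3, 1), date(2024, 3, 31))
print(len(rows), scanned, len(table.partitions))  # 2 1 3
```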

Denormalization

In traditional relational databases, normalization is essential for eliminating redundancy and maintaining data integrity. However, in a data warehouse environment where read performance is critical, denormalization can be beneficial. Denormalizing certain tables by combining related attributes can improve query response time by reducing join operations. Carefully evaluate your data access patterns and consider denormalization where appropriate to optimize performance.

Data Compression

Data compression techniques can significantly reduce the storage footprint of your data warehouse, leading to cost savings and improved query performance. By compressing data at the storage level, you can store more information in a limited space and minimize disk I/O operations. However, it's important to strike a balance between compression ratios and CPU overhead. Experiment with different compression algorithms and settings to find the optimal configuration for your workload.
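The trade-off between compression ratio and CPU cost can be measured directly. The sketch below uses Python's standard `zlib` on a hypothetical low-cardinality column; warehouses typically rely on their own columnar encodings, so zlib here only illustrates how to compare levels, not what a given warehouse does internally.

```python
import json
import time
import zlib

# Hypothetical low-cardinality column, typical of warehouse dimension attributes.
column = json.dumps(["EU", "US", "APAC"] * 20_000).encode("utf-8")

for level in (1, 6, 9):
    start = time.perf_counter()
    packed = zlib.compress(column, level)
    elapsed_ms = (time.perf_counter() - start) * 1000
    assert zlib.decompress(packed) == column  # lossless round trip
    print(f"level={level} ratio={len(column) / len(packed):.1f}x time={elapsed_ms:.2f} ms")
```

Running the same comparison on a sample of real data is a cheap way to choose a setting before changing storage configuration.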

Data Archiving

Over time, a data warehouse accumulates vast amounts of historical data that may not be frequently accessed but still holds value for analysis. Implementing a data archiving strategy allows you to move older or less frequently used data to separate storage tiers, freeing up resources in your primary database. This approach helps improve query performance by reducing the amount of data that needs to be scanned during analytical queries.
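A minimal archiving sketch, again using SQLite as a stand-in: rows older than a cutoff are copied to an archive table and deleted from the primary table in a single transaction, so a failure cannot leave rows duplicated or lost. The table names, the cutoff, and the `archive_before` helper are hypothetical; in practice the archive would usually live on a cheaper storage tier.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, order_date TEXT, amount REAL);
    CREATE TABLE orders_archive (id INTEGER PRIMARY KEY, order_date TEXT, amount REAL);
    INSERT INTO orders VALUES (1, '2019-06-01', 10.0), (2, '2023-03-15', 20.0), (3, '2024-01-02', 30.0);
""")

def archive_before(conn: sqlite3.Connection, cutoff: str) -> int:
    """Move rows older than `cutoff` to the archive table; return how many moved."""
    with conn:  # copy and delete commit together or roll back together
        conn.execute("INSERT INTO orders_archive SELECT * FROM orders WHERE order_date < ?", (cutoff,))
        moved = conn.execute("DELETE FROM orders WHERE order_date < ?", (cutoff,)).rowcount
    return moved

moved = archive_before(conn, "2023-01-01")
print(moved)  # 1
```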

Incremental ETL: Updating Only the Changed Data

Benefits of Incremental ETL

Incremental ETL is a data integration technique that processes only new or changed data rather than the entire dataset. Change data capture (CDC), which detects inserts, updates, and deletes in source systems, is a common way to implement it. This approach offers several benefits that can greatly enhance the efficiency and effectiveness of ETL processes.
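A simple form of incremental extraction uses a high-water mark. The sketch below assumes a hypothetical `updated_at` column that the source sets on every insert and update; each run fetches only rows newer than the last watermark and upserts them into the target. SQLite stands in for both systems, and the `ON CONFLICT ... DO UPDATE` upsert syntax requires SQLite 3.24 or later. Timestamp watermarks cannot see deleted rows and can miss late-committing rows that share a timestamp; log-based CDC tools exist largely to close those gaps.

```python
import sqlite3

# Hypothetical source and target; `updated_at` is assumed to change on every write.
src = sqlite3.connect(":memory:")
tgt = sqlite3.connect(":memory:")
src.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")
tgt.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")

def incremental_sync(last_watermark: str) -> str:
    """Copy rows changed since `last_watermark` into the target; return the new watermark."""
    changed = src.execute(
        "SELECT id, name, updated_at FROM customers WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    with tgt:
        # Upsert: insert new rows and overwrite existing ones with the latest values.
        tgt.executemany(
            """INSERT INTO customers (id, name, updated_at) VALUES (?, ?, ?)
               ON CONFLICT(id) DO UPDATE SET name = excluded.name, updated_at = excluded.updated_at""",
            changed,
        )
    return changed[-1][2] if changed else last_watermark

src.executemany("INSERT INTO customers VALUES (?, ?, ?)",
                [(1, "Ana", "2024-01-01T10:00"), (2, "Bo", "2024-01-01T11:00")])
wm = incremental_sync("")  # first run loads everything
src.execute("UPDATE customers SET name = 'Ana M.', updated_at = '2024-01-02T09:00' WHERE id = 1")
wm = incremental_sync(wm)  # second run picks up only the changed row
print(wm, tgt.execute("SELECT name FROM customers ORDER BY id").fetchall())
```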

1. Reduced Processing Time and Resource Requirements

One of the primary advantages of incremental ETL is its ability to significantly reduce processing time and resource requirements. By updating only the changed or new data, rather than processing the entire dataset, organizations can save valuable time and computing resources. This is particularly beneficial when dealing with large volumes of data or when working with real-time or near-real-time data integration scenarios.

2. Improved Data Integration Efficiency

Incremental ETL enables organizations to achieve more efficient data integration by focusing on the changes that have occurred since the last ETL process was executed. Instead of extracting, transforming, and loading all the data again, only the modified or newly added records are processed. This not only saves time but also minimizes the risk of errors that may occur during repetitive processing.

3. Real-Time Data Integration Support

In today's fast-paced business environment, real-time data integration has become increasingly important for organizations to make timely and informed decisions. Incremental ETL plays a crucial role in supporting real-time data integration by capturing and processing changes as they occur. This allows organizations to have up-to-date information available for analysis and reporting purposes without delay.

4. Near-Real-Time Reporting

With traditional batch-oriented ETL processes, there is often a delay between when changes occur in source systems and when they are reflected in reports or analytics dashboards. However, with incremental ETL, near-real-time reporting becomes possible. By continuously capturing and processing changes in source data, organizations can generate reports that reflect the most recent updates almost instantaneously. This empowers decision-makers with the most current information, enabling them to respond quickly to changing business conditions.

5. Efficient Data Synchronization

Incremental ETL is particularly useful when it comes to synchronizing data between different systems or databases. By identifying and tracking changes in source data, organizations can ensure that updates are accurately propagated to the target systems. This helps maintain data consistency and integrity across multiple platforms, reducing the risk of discrepancies or conflicts.

6. Scalability and Flexibility

As organizations deal with ever-increasing volumes of data, scalability and flexibility become critical factors in ETL processes. Incremental ETL scales well because each run processes only the changed or new data, so its cost tracks the volume of changes rather than the overall dataset size. This allows organizations to handle growing data volumes without sacrificing performance or incurring excessive resource costs.

Testing and Validating ETL Processes

Importance of ETL Testing and Validation

Testing and validating ETL (Extract, Transform, Load) processes is crucial to ensure the accuracy and reliability of data integration. ETL processes involve extracting data from various sources, transforming it into a consistent format, and loading it into a target system such as a data warehouse. Rigorous testing procedures are necessary to identify any issues or discrepancies in the data during these complex processes.

Ensuring Data Accuracy and Reliability

One of the primary reasons for conducting ETL testing is to ensure that the data being integrated is accurate and reliable. Inaccurate or inconsistent data can lead to incorrect analysis and decision-making. By thoroughly testing the ETL processes, organizations can have confidence in the quality of their data, which is essential for making informed business decisions.

Data Reconciliation

Data reconciliation is an important aspect of ETL testing. It involves comparing the source data with the transformed data to ensure that there are no discrepancies or missing records. This process helps identify any issues that may arise during the transformation phase, such as incorrect mappings or transformations. By reconciling the data at each stage of the ETL process, organizations can detect and rectify any errors before they impact downstream systems.
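A minimal reconciliation sketch: compare row counts, find keys missing from either side, and detect value-level mismatches by hashing the compared fields of each row. The record layout and the `reconcile` helper are hypothetical. When a transformation intentionally changes values, compare the target against the expected transformed output rather than the raw source.

```python
import hashlib

def row_fingerprint(row: dict, fields: tuple[str, ...]) -> str:
    """Stable hash of the selected fields, used to detect value-level differences."""
    payload = "|".join(str(row.get(f)) for f in fields)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def reconcile(source: list[dict], target: list[dict], key: str, fields: tuple[str, ...]) -> dict:
    """Report counts plus missing, unexpected, and mismatched keys between two datasets."""
    src = {r[key]: row_fingerprint(r, fields) for r in source}
    tgt = {r[key]: row_fingerprint(r, fields) for r in target}
    return {
        "source_count": len(src),
        "target_count": len(tgt),
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "unexpected_in_target": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }

source = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 20.0}, {"id": 3, "amount": 30.0}]
target = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 25.0}]
report = reconcile(source, target, "id", ("amount",))
print(report)
```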

Performance Testing

Performance testing is another critical component of ETL testing. It involves assessing how well the ETL processes perform under different conditions, such as varying volumes of data or concurrent users. By simulating real-world scenarios, organizations can identify potential bottlenecks or performance issues that may affect the efficiency and speed of their ETL processes. Performance testing helps optimize resource utilization and ensures that the ETL processes can handle large volumes of data without compromising performance.

Error Handling Scenarios

ETL processes often encounter errors due to various factors such as invalid data formats, network failures, or system crashes. Effective error handling mechanisms are essential to minimize disruptions and ensure smooth operation even in exceptional circumstances. During ETL testing, organizations should simulate different error scenarios to validate the error handling capabilities of their ETL processes. This includes testing how well the system recovers from errors, logs error details for troubleshooting, and notifies relevant stakeholders.

Automation and Efficiency

To streamline the ETL testing process and improve efficiency, organizations can leverage ETL testing tools and frameworks. These tools automate repetitive tasks, such as data reconciliation and performance testing, reducing manual effort and saving time. By automating the testing processes, organizations can execute tests more frequently and consistently, ensuring that any issues are identified early on. Additionally, these tools provide comprehensive reports and dashboards that enable stakeholders to track the progress of testing activities and monitor the overall health of their ETL processes.

Best Practices for ETL Testing

To ensure effective ETL testing, organizations should follow some best practices:

Define clear test objectives: Clearly define what needs to be tested and establish measurable criteria for success.

Use representative test data: Test with a variety of data types and volumes that closely resemble real-world scenarios.

Implement end-to-end testing: Test the entire ETL process from data extraction to loading into the target system.

Conduct regression testing: Regularly retest previously validated components to ensure that changes or updates have not introduced new issues.

Collaborate with stakeholders: Involve business users, data analysts, and IT teams in the testing process to gather diverse perspectives and validate requirements.
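Several of these practices (clear objectives, representative data, and regression testing) translate directly into unit tests for individual transformations. The sketch below uses Python's standard `unittest` module against a hypothetical `to_cents` transformation; the test cases are illustrative, and in a real project they would come from edge cases actually observed in source data.

```python
import unittest
from decimal import Decimal, InvalidOperation, ROUND_HALF_UP

def to_cents(amount: str) -> int:
    """Hypothetical transformation under test: parse a decimal string into integer cents."""
    return int((Decimal(amount.strip()) * 100).quantize(Decimal("1"), rounding=ROUND_HALF_UP))

class ToCentsTest(unittest.TestCase):
    # Each test states one objective with explicit expected values.
    def test_typical_values(self):
        self.assertEqual(to_cents("12.50"), 1250)
        self.assertEqual(to_cents(" 3 "), 300)

    def test_negative_and_rounding(self):
        self.assertEqual(to_cents("-0.50"), -50)
        self.assertEqual(to_cents("1.005"), 101)

    def test_rejects_garbage(self):
        # Regression guard: malformed input must fail loudly rather than load as zero.
        with self.assertRaises(InvalidOperation):
            to_cents("N/A")

suite = unittest.defaultTestLoader.loadTestsFromTestCase(ToCentsTest)
result = unittest.TextTestRunner(verbosity=2).run(suite)
print("all passed:", result.wasSuccessful())
```

Keeping such tests in the pipeline's repository lets them run on every change, which is what makes regression testing routine rather than occasional.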

Conclusion

In conclusion, mastering ETL development is essential for businesses seeking to harness the power of their data. By understanding the concepts and processes involved in ETL, organizations can improve data quality, enhance efficiency and scalability, streamline data integration, and ensure compliance with data governance regulations.

Following best practices in ETL development is crucial for achieving accurate and reliable data. By adhering to these practices, organizations can save valuable time and resources while making informed decisions based on trustworthy insights. With accurate and reliable data at their disposal, businesses can gain a competitive edge and drive success.

To unlock the full potential of your data and drive business success, it is imperative to start mastering ETL development today. By investing in training and resources to improve your understanding of ETL concepts and processes, you can effectively integrate and consolidate data from various sources. This will enable you to make more informed decisions, identify valuable insights, and optimize your operations.

Don't miss out on the opportunity to leverage your data effectively. Start mastering ETL development now and take your business to new heights.
